Introduction

Mathematical formulas represent one of the most challenging frontiers in document extraction. While standard OCR excels at linear text and language character recognition, mathematical notation introduces spatial complexity that breaks traditional character-by-character approaches. Today, we're excited to announce Pulse's Formula Recognition submodule, a specialized component of our extraction pipeline designed specifically for mathematical content.

The Mathematical Content Challenge

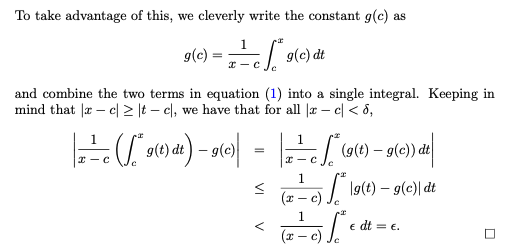

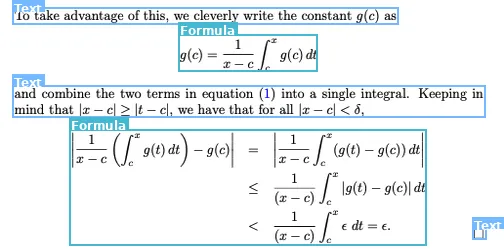

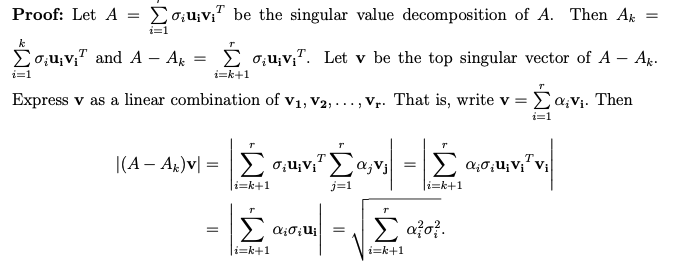

Mathematical notation is fundamentally different from natural language text. Consider the expression:

\[

\frac{\partial^2 f}{\partial x^2} + \lambda \nabla^2 f = 0

\]

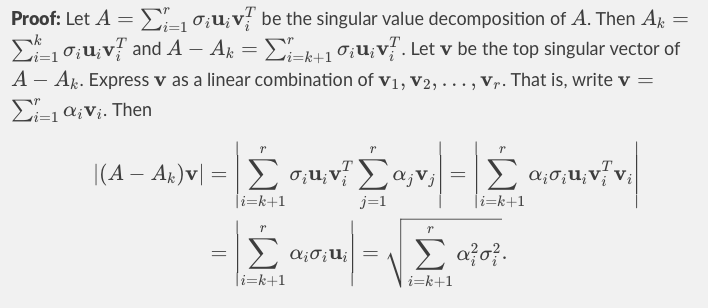

Traditional OCR might see this as a sequence of disconnected symbols: ∂, ², f, /, ∂, x, ², etc. But mathematically, this represents a differential equation where symbol positioning, superscripts, subscripts, and operator precedence carry semantic meaning.

The challenge becomes exponentially more complex with:

- Handwritten papers with inconsistent spacing

- Complex fractions with nested denominators

- Matrix notation with precise alignment requirements

- Multi-line equations with continuation symbols

- Mixed mathematical and textual content in research papers and academia settings

Our Approach: Specialized Vision Architecture

Rather than forcing mathematical content through general-purpose models, we developed a dedicated mini-model within the Pulse pipeline specifically for formula recognition. This approach allows us to:

1. Mathematical Symbol Understanding

Our vision encoder is trained to recognize mathematical symbols as semantic units rather than simple character patterns. The model understands that ∫ is an integral operator requiring specific bounds notation, that Σ implies summation with index variables, and that parentheses in mathematical contexts have precedence implications. The translation into LaTeX syntax is done as a post step.

2. Spatial Relationship Modeling

Mathematical notation relies heavily on spatial positioning. Our model processes formulas as 2D structures, understanding that:

- Superscripts indicate exponents or indices

- Subscripts denote variables or tensor notation

- Fraction bars create hierarchical groupings

- Radical symbols encompass expressions beneath them

The vision encoder uses attention mechanisms specifically tuned for these spatial relationships, allowing it to correctly interpret complex nested structures.

3. Context-Aware LaTeX Generation

Our output layer generates clean, compilable LaTeX code that preserves both the visual appearance and semantic meaning of the original formula. The model has been trained to:

- Choose appropriate LaTeX commands for specific mathematical contexts

- Handle edge cases in notation (e.g., distinguishing between multiplication and cross products)

- Maintain proper grouping and operator precedence

- Generate readable LaTeX that human reviewers can easily verify

Training Data and Methodology

Dataset Construction

As is often the case with such specialized, fine-tuned models, data is the bottleneck. We assembled a training/test corpus of 10M+ formula/LaTeX pairs from diverse sources:

- Academic Papers: Mathematical journals, physics preprints, engineering specifications

- Handwritten Content: Student notebooks, professor lecture notes, research sketches

- Technical Documentation: Standards documents, patent filings, regulatory submissions

- Historical Sources: Scanned mathematical texts, archived research papers

Ground Truth Generation

Creating accurate LaTeX ground truth at scale required a multi-stage verification process, and we worked with many academic labs, researchers, and offshore data labeling teams to make this possible. Our steps included:

- Initial LaTeX compilation verification to ensure syntactic correctness

- Visual rendering comparison between source and generated LaTeX

- Human expert review for mathematical accuracy and semantic preservation

- Cross-validation against multiple LaTeX compilers (pdfTeX, XeTeX, LuaTeX) for robustness

Technical Performance

Accuracy Metrics

It’s incredibly important to build a robust eval pipeline to continuously improve the model on. We’ll give a high-level overview here of this stage, but we have a dedicated open-source benchmark with code snippets in the works to be released soon! We evaluate formula recognition using multiple metrics that capture different aspects of mathematical content extraction:

Symbol-Level Accuracy: Character-by-character comparison of mathematical symbols

Structure Preservation: Hierarchical matching of mathematical expressions

LaTeX Compilation Rate: Percentage of outputs that compile without errors

Semantic Equivalence: Mathematical meaning preservation across different valid LaTeX representations

We tested the mathematical expressions in documents across resolution ranges, with artificial noise, skew, and rotations incorporated to evaluate performance variability.

Real-World Applications

Academic Research Workflows

AI teams and academic labs are already using Pulse's formula recognition for:

- Literature Review Automation: Extracting mathematical models from research papers for systematic comparison

- Knowledge Base Construction: Building searchable databases of mathematical techniques and formulas

- Research Acceleration: Converting handwritten research notes into digital, searchable formats

Performance at Scale

To date, we have processed over 700 million pages through the Pulse pipeline. Mathematical content appears in approximately 15% of academic documents and 8% of technical enterprise documents in our corpus across all customers. Our formula recognition submodule has maintained:

- 99.2% uptime across production deployments

- <100ms average latency per formula recognition operation

- 94% first-pass accuracy on complex mathematical expressions

- 98.7% LaTeX compilation success rate for generated outputs

Looking Forward

Mathematical content extraction represents just one dimension of the specialized model approach we're taking with Pulse. We're actively improving and researching similar submodules for:

- Circuit diagram parsing for electrical engineering specifications and patent documents

- Table extraction and structure recognition for complex financial and regulatory filings

- Chart and graph vision extraction for automatic data digitization from research publications

Get Early Access

We're offering early access to Pulse's Formula Recognition submodule for AI teams, academic labs, and enterprise users working with mathematical content. The response from research institutions has been exceptional - our specialized approach consistently outperforms general OCR solutions on mathematical content by significant margins.

Interested in testing formula recognition with your mathematical content? Contact our team for a demonstration and early access to the submodule.

--

The Pulse team continues to push the boundaries of document extraction accuracy. Our specialized model approach allows us to tackle domain-specific challenges that general-purpose solutions leave unsolved. Formula recognition is just the beginning.